Limiting Concurrent TCP Connections in Rust with Tokio's Semaphore

While building a TCP server (TcpListener) in Rust, I needed to process connections one at a time. I kept noticing that new connections were being accepted but never actually processed; they just sat waiting until the client gave up and disconnected. In this post, I will walk through a minimal TCP server that uses a Semaphore to enforce a maximum number of concurrent connections.

Examples: Sequential vs. Connection-Limited Server

To understand the problem properly, we’ll build this in two steps. First, a simple sequential server with no concurrency and no connection limiting, just enough to see the issue in action. Then we’ll evolve it into a proper async server that handles connections concurrently while enforcing a hard cap on how many connections can be active at the same time.

Sequential Connections With No Connection Limitation

This is the starting point: a bare TCP server with no connection limitation at all. It binds to port 8999 and enters an infinite loop, waiting for clients. When one connects, it reads whatever data they send and prints it to the console. Once that client disconnects, it moves on to the next one.

The limitation here is that everything is sequential. The server is fully blocked on the current connection until it closes.

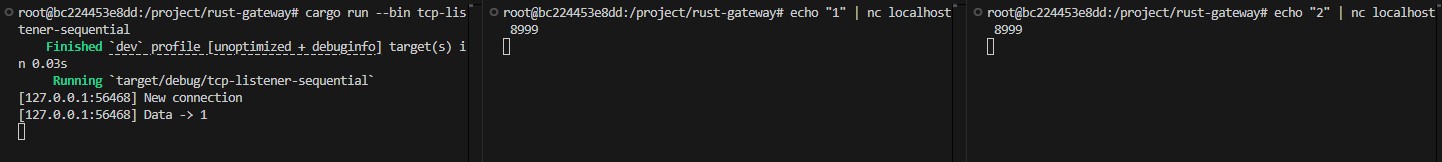

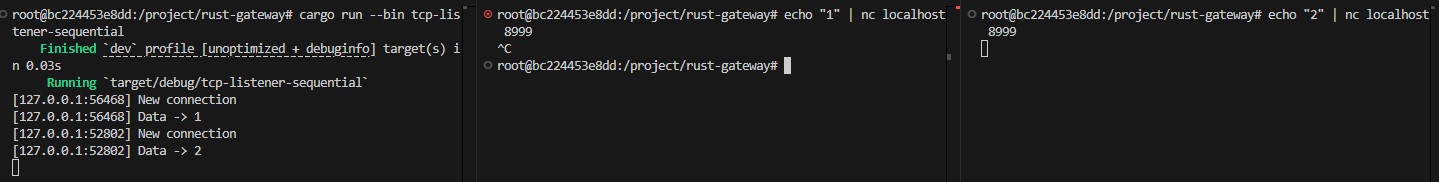

Here is an example:

- Two clients connect at the same time. Only the first one gets processed; the second just sits there waiting.

- The first client disconnects, the server moves on, and the second connection finally starts being handled.

The second client doesn’t get any attention until the first one is done. Right now there’s nothing stopping multiple clients from connecting simultaneously and sitting idle, consuming resources while they wait. That’s the problem we’re about to solve.

Async Connections With Connection Limitation

Now we add the actual connection limit. Two things changed from the last version:

- Each connection is handled inside a tokio::spawn, meaning connections are no longer sequential. The server can handle new ones while others are still being processed.

- A Semaphore is created with a fixed MAX_OPENSOCKETS. When a new connection arrives, it immediately tries to grab a permit with try_acquire. If one is available, the connection is processed normally. If not, the connection is rejected/closed and the task exits.

When the task finishes, whether the client disconnects cleanly or hits an error, _guard is dropped automatically and the permit is returned to the semaphore, freeing up the slot for the next connection.

The Arc wrapper is just what allows the semaphore to be safely shared across multiple tasks without copying it.

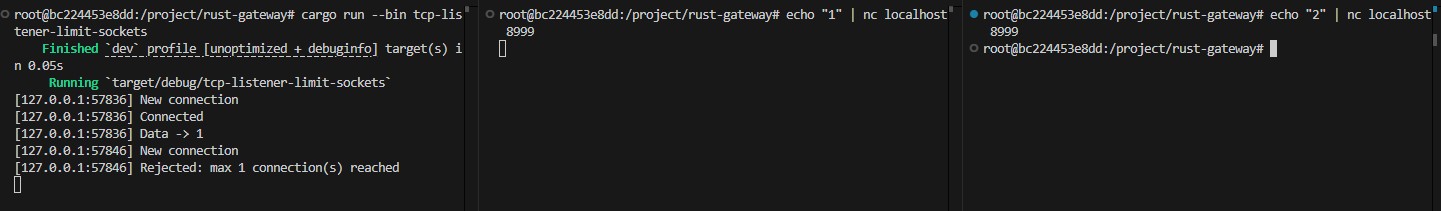

In this example, a first client is connected to the server when a second client tries to connect. The second client is rejected and its connection is closed immediately.

Conclusion

This whole journey started with a wrong assumption on my part: I thought that in a sequential program, any new connection attempt would simply be rejected if socket.accept() hadn’t been called yet. It turns out the OS completes the TCP handshake and queues incoming connections in the listening socket’s backlog regardless, so they pile up silently even if your code isn’t ready for them.
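The queuing behaviour is easy to reproduce with the standard library alone. In this sketch (the function name connect_without_accept is hypothetical, and an OS-assigned port is used instead of 8999), a client connects and sends data before the server has called accept() even once:

```rust
use std::io::Write;
use std::net::{TcpListener, TcpStream};

// Returns true if the connection handed out by accept() is the one that
// was established before accept() was ever called.
fn connect_without_accept() -> std::io::Result<bool> {
    // Port 0 asks the OS for any free port.
    let listener = TcpListener::bind("127.0.0.1:0")?;
    let addr = listener.local_addr()?;

    // accept() has not been called yet, but the connect still succeeds:
    // the OS completes the handshake and parks the connection in the backlog.
    let mut client = TcpStream::connect(addr)?;
    client.write_all(b"hello")?;

    // Only now does the server pick the queued connection up.
    let (_socket, peer) = listener.accept()?;
    Ok(peer == client.local_addr()?)
}

fn main() -> std::io::Result<()> {
    println!("queued before accept: {}", connect_without_accept()?);
    Ok(())
}
```

From the client's point of view the connection is fully established, which is why it appears to hang instead of being refused.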

With a Semaphore and a few lines of Rust, you get a hard cap that’s automatic, safe, and hard to leak by accident, because Rust’s ownership model releases the permit whenever its guard is dropped.